As we navigate the midpoint of 2026, the industrial landscape has reached a critical inflection point. For the past two years, the conversation was dominated by the “Year of the Agent”, a rush to deploy specialized AI bots to summarize reports or provide chat-based interfaces for maintenance manuals.

However, as Verdantix recently highlighted in their Global Corporate Survey 2026: Industrial Transformation Budgets, Priorities And Tech Preferences, the “Surface AI” era is ending. While 84% of EHS and Operations leaders have prioritized AI adoption, a staggering 89% of decision-makers identify architectural complexity as their primary barrier to scaling.

The industry is realizing that a fleet of disconnected agents reaches its limit in complex environments, where context is a differentiating factor. To bridge the trust gap and achieve true ROI, the movement has shifted toward Systemic Solutions.

The rise of Agentic AI

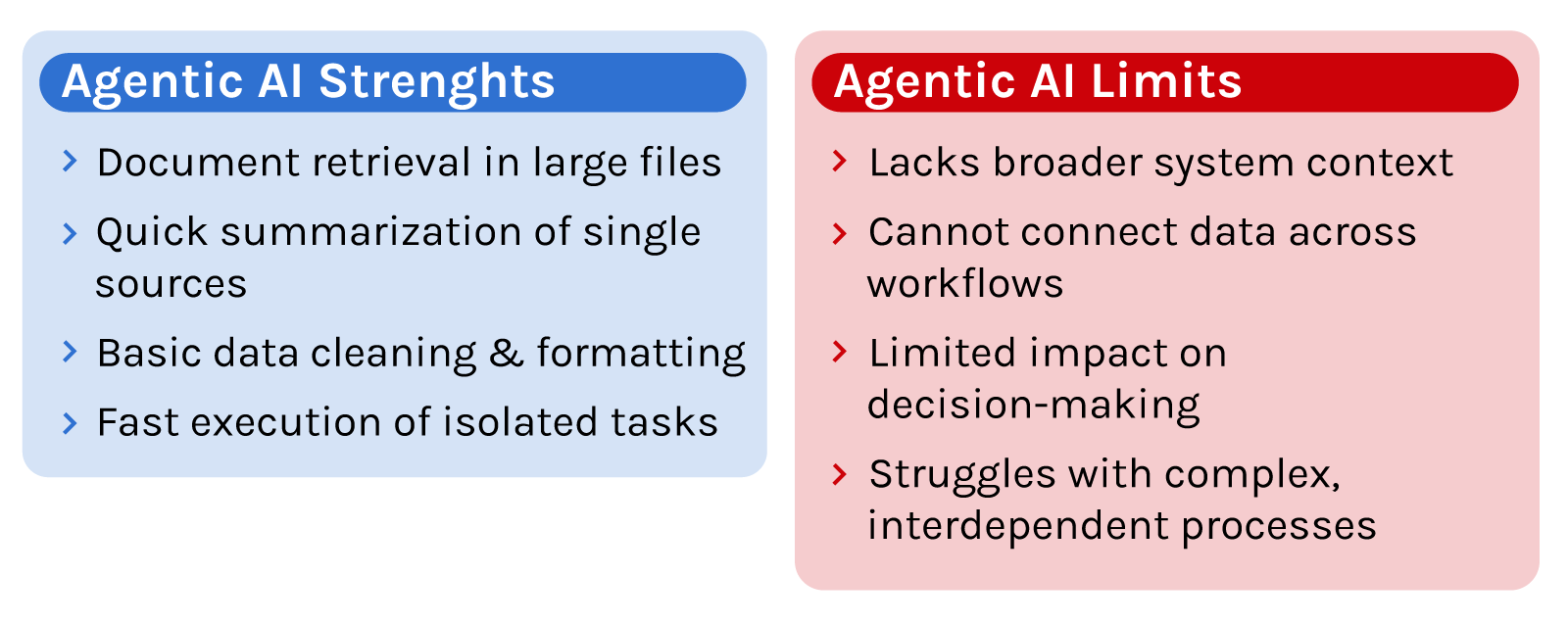

While we are moving toward a more connected future, it is important to recognize that standalone AI agents still play a valuable role. There are many everyday tasks where you don’t necessarily need a full-scale systemic solution to see a benefit.

For instance, they excel at document retrieval, such as helping an engineer find a specific safety rule or torque value hidden in a 500-page manual. They are also perfect for initial summarization, turning a single inspection log or meeting transcript into clear bullet points in seconds. Even for basic data cleaning, a simple coding agent can quickly write a script to reformat a spreadsheet. In these specific cases, a standalone agent is fast, effective, and gets the job done without needing to understand the rest of the system.

However, agents eventually hit a limit. They can tell you what’s inside a single file, but they can’t see the bigger picture. They don’t know how that one piece of information connects to the rest of your operation or how it impacts your next steps.

The moment your work needs true context, such as understanding how past data, current situations, and future workflows all tie together, a standalone agent isn’t enough. That is where you need a systemic foundation.

Defining the Nervous System: What is Systemic AI?

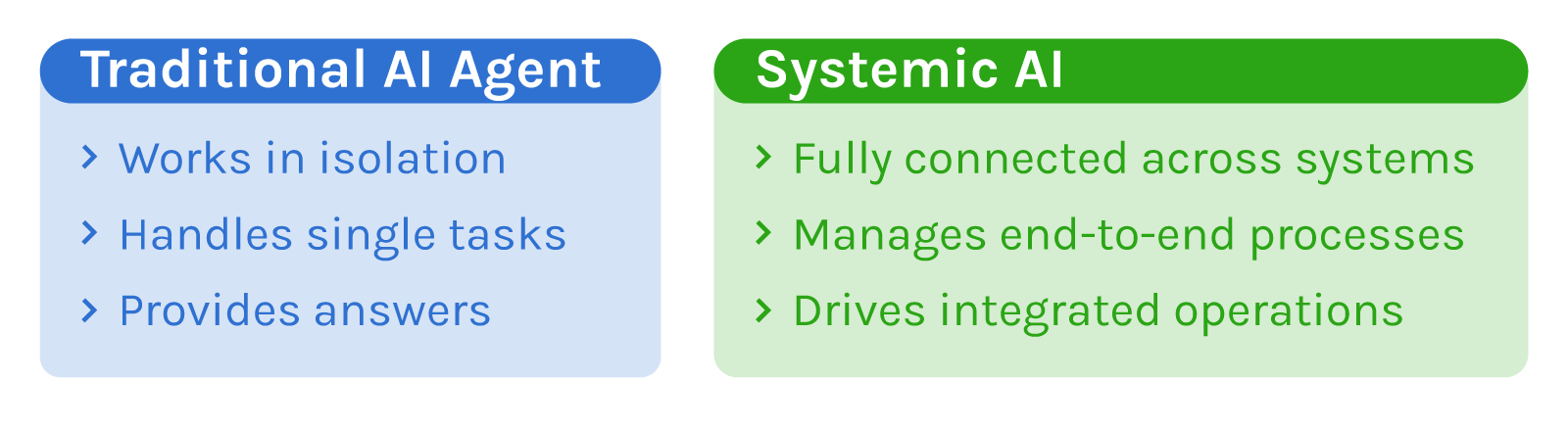

If a standard AI agent is a high-performing specialist working in a silo, Systemic AI is the entire organizational nervous system. Think of it as the difference between a single specialized tool and a fully connected factory floor. While the last few years were all about “Surface AI”, such as chatbots and copilots that handle one task at a time, the industry is moving toward something much deeper.

Systemic AI comes into play as the backbone of an entire operation. It ties together data, digital twins, and workflows into one smooth process. Instead of just giving you an answer to a specific question, it manages the entire cycle from start to finish. It’s the shift from having an isolated AI assistant to having an AI-powered foundation that keeps everything running and connected.

Why “Agents” Are Not Enough for the Industry

The core limitation of an agent approach, as mentioned, is its lack of context. At the same time, the industry is moving past the initial phase of AI hype and entering a stage where what actually matters is the internal mechanics of a system, whether there is a genuine business need for the technology, and whether the organization has the right governance and capacity to handle it.

We are seeing a shift in how companies invest. They are moving from an era of simply buying software to one of entering into multi-year commitments, helping fund new product features, and sharing their private data. This shift turns vendors into essential partners who manage and protect an organization’s specific data and core processes.

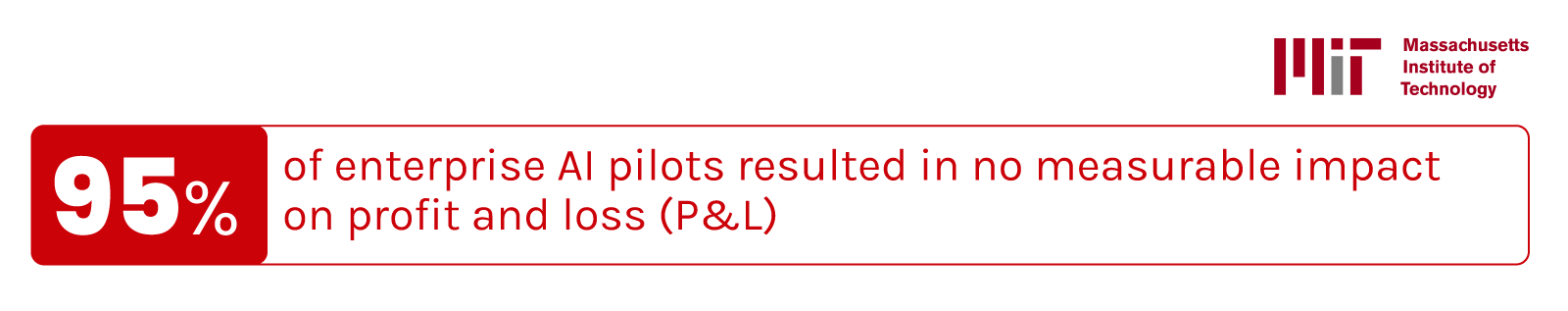

However, this deeper integration has led many to hit what Verdantix calls an “ROI wall”. While adoption metrics are high, the actual impact on the business is often weak. A 2025 MIT study found that 95% of enterprise AI pilots resulted in no measurable impact on profit and loss (P&L), with only a small minority generating repeatable value.

Because of this lack of results, leadership behavior is changing. The broad “AI transformation” narratives are being rapidly replaced by a much more selective, ROI-driven approach.

The Survival of the Integrated

As the initial hype drops, the AI market is going through a structural contraction. Basic AI tools that only offer simple chat features are being closed or taken over as they struggle to survive without investment. What remains is a smaller but much more reliable ecosystem focused on clear results. This new landscape is led by:

- Integrated Suites: Platforms that combine workflow, data, and agentic capabilities into one coherent stack.

- Vertical Specialists: Experts who solve very specific, high-value problems within a domain.

To break through the “ROI wall,” companies need more than just a smart bot; they need a way to organize their entire data environment so the AI actually has a reliable foundation to work with.

The Movement: From Fragmented Agents to Systemic Architecture

The market data tells a clear story. The Enterprise AI Platform market is projected to surge to over $50 billion by 2030. This growth isn’t being driven by simple chatbots, but by platforms that can ingest, process, and act upon the massive volumes of data that have historically burdened heavy industry.

Verdantix’s latest analysis suggests that the most successful companies are moving away from the slow, manual effort of trying to connect data points from different sources by hand.

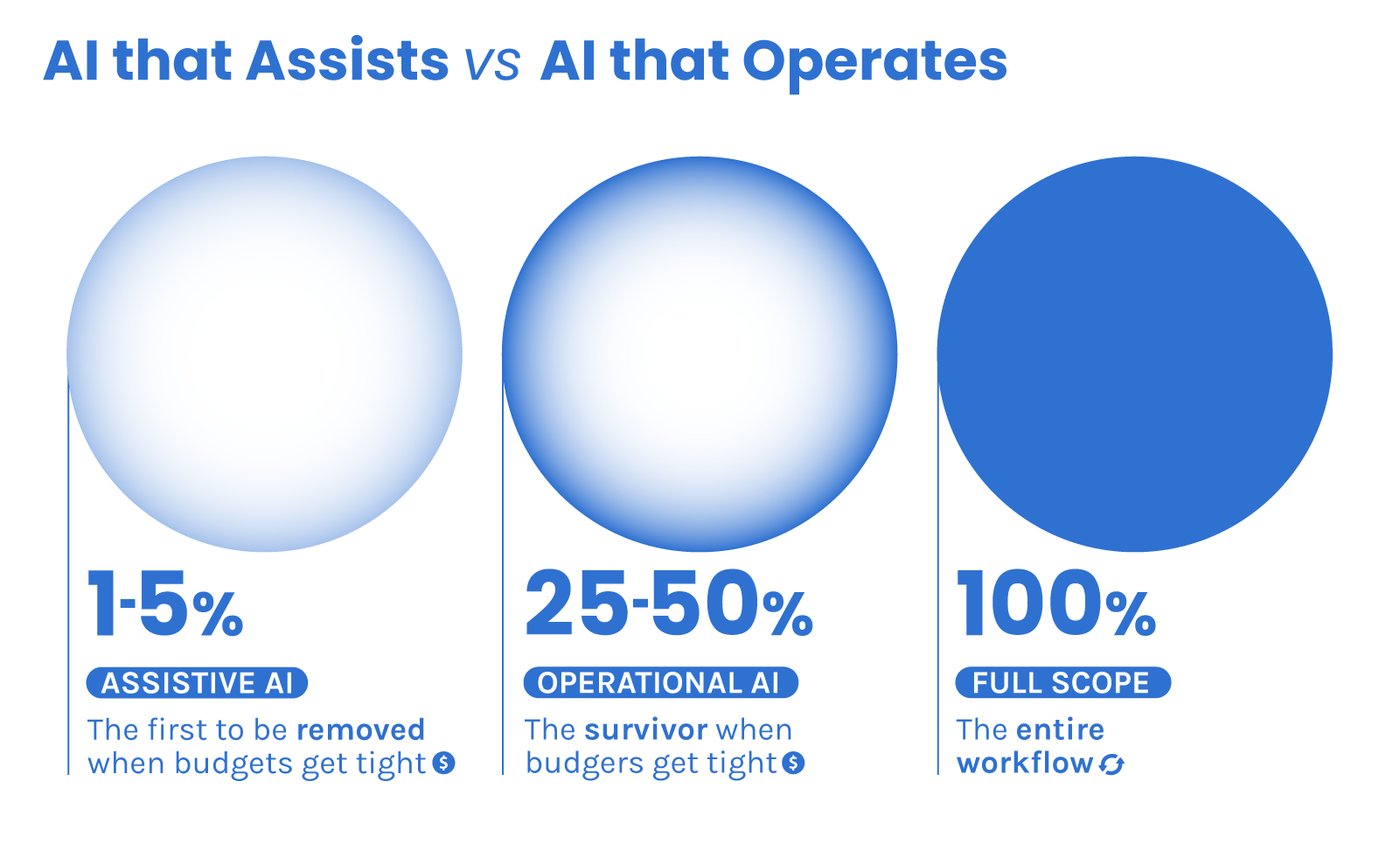

Instead, they are adopting integrated environments where AI is part of the core architecture. When budgets get tight, general-purpose “copilots” that only handle 1% to 5% of a task are the first to be removed. The real survivors will be the platforms that take ownership of 25% to 50% of a specific workflow.

Achieving this requires access to what we call “dark matter” data (things like equipment logs, edge telemetry, and complex industrial files). A true systemic solution doesn’t just provide insights; it handles the full loop from collecting data to making a decision and delivering a final outcome.

Because of this, the focus is shifting away from launching generic new features. Instead, the goal is now to deepen the automation of these core workflows. This involves solving for those rare and complex situations that usually disrupt operations, connecting more deeply with record systems, and increasing the level of autonomy wherever safety and governance allow.

Vidya’s platform as a Systemic solution

Unlike fragmented AI agents, Vidya’s platform is designed as an end-to-end ecosystem for Digital Lifecycle Management. The real difference lies in its architecture. The platform is built on a technology stack that prioritizes interoperability. This means the platform connects directly with existing systems and data sources to become a core part of an integrated suite.

The foundation of Data

A systemic solution depends on how data moves across the operation. In most industrial environments, critical information is spread across systems like CMMS, ERP, P&IDs, isometrics, and inspection reports. Each of these holds part of the picture, but they are not designed to work together. This fragmentation forces teams to manually connect information every time a decision is required.

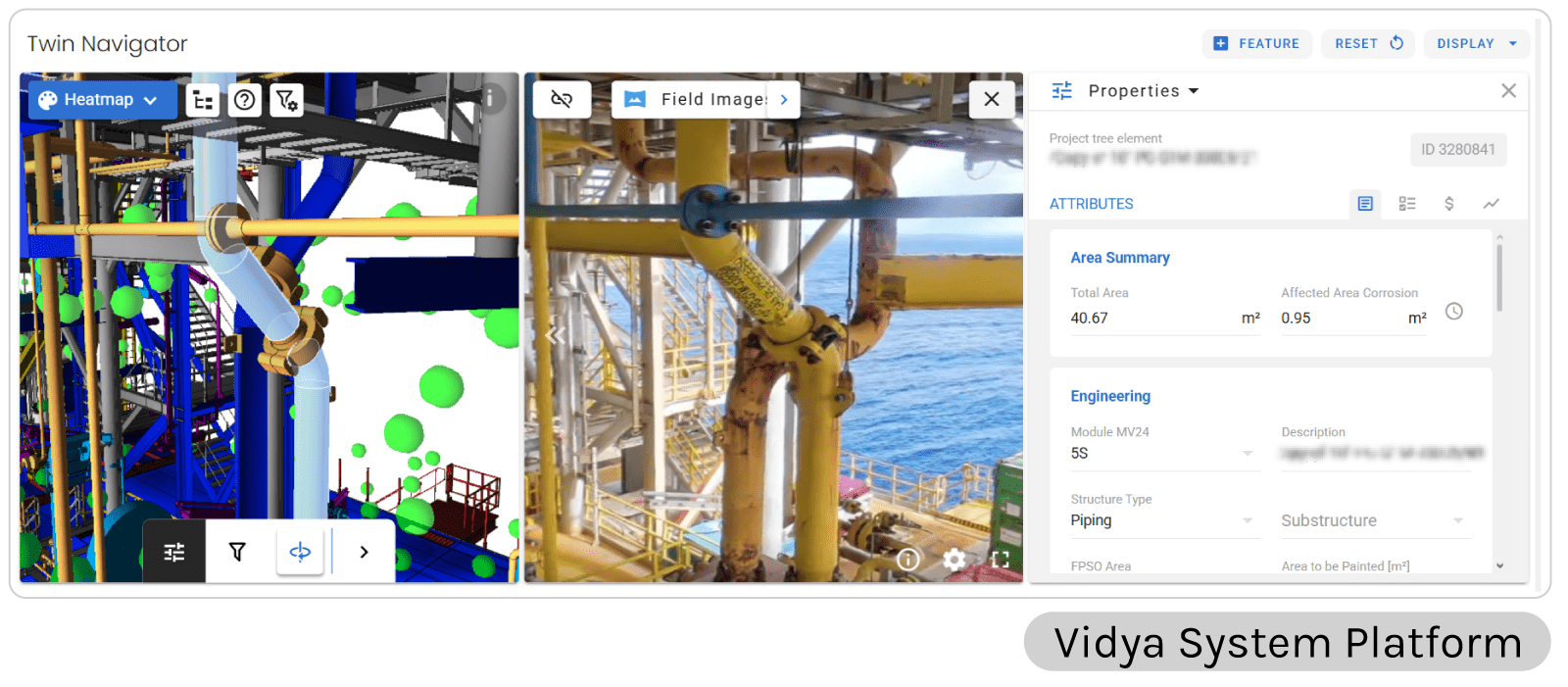

For this reason, the Vidya System Platform (VSP) sits at the heart of Vidya’s systemic solution, consolidating these sources into a single environment. The system functions as a Visual Asset Management suite that provides both the spatial context that agents lack and the interoperability needed to deploy the platform across multiple use cases.

To make this possible, Vidya “pins” critical information directly onto the 3D components of the asset. Each document, inspection, or specification is associated with its exact location in the field.

This changes how data is used. With information contextualized in a 3D viewer, users can access technical specifications and view the history of any component with a few clicks.
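As a rough illustration of the pinning idea, the sketch below models a component that has a 3D position and a list of attached records. The names used here (`Component`, `PinnedRecord`, the tag `V-101`, the source labels) are hypothetical and are not taken from Vidya’s platform; the sketch only shows how records from separate systems could be grouped under one located component and read back as a history.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PinnedRecord:
    source: str  # originating system, e.g. "CMMS" or "inspection" (illustrative labels)
    title: str
    date: str    # ISO date string, so lexical order matches chronological order


@dataclass
class Component:
    tag: str                              # hypothetical asset tag
    position: tuple[float, float, float]  # x, y, z in the 3D model's coordinates
    records: list[PinnedRecord] = field(default_factory=list)

    def pin(self, record: PinnedRecord) -> None:
        """Attach a record to this component's location."""
        self.records.append(record)

    def history(self) -> list[PinnedRecord]:
        """Return all pinned records, oldest first."""
        return sorted(self.records, key=lambda r: r.date)


valve = Component(tag="V-101", position=(12.5, 3.0, 7.2))
valve.pin(PinnedRecord("inspection", "Coating survey", "2025-03-14"))
valve.pin(PinnedRecord("CMMS", "Gasket replacement work order", "2024-11-02"))

for record in valve.history():
    print(record.date, record.source, record.title)
```

Keying everything to the component, rather than to the system a record came from, is what lets a single lookup return the full picture for one piece of equipment.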

Access also extends beyond the control room. With mobile support, teams can overlay digital intelligence onto physical assets, ensuring decisions are made based on the most accurate, contextualized data available.

The video demonstrates Izzy, an industrial-grade AI agent orchestrator designed to streamline asset monitoring and data retrieval. Through a secure data ontology and retrieval-augmented generation (RAG), Izzy structures complex industrial data into accessible, query-based insights. The video shows how it orchestrates agents to execute advanced industrial tasks, including automated issue detection, activity updates, and 3D heatmap generation.

Processing Reality

Data structured in context is only useful if it reflects what is actually happening in the field. In industrial environments, this is a constant challenge. Assets change over time, and documentation rarely keeps pace. To address this, Vidya uses its spatial processing capabilities to bridge the gap between the virtual representation and the field by processing large volumes of data from inspections, reality capture, and field surveys. This is essential in complex industrial environments, where conditions in the field are constantly changing.

However, raw imagery alone has limited value unless it can be positioned correctly within the asset. The platform does this by processing high-definition imagery and applying a spatial computing framework to place data and imagery at the right location in the field. Instead of isolated photos, the result is a navigable 3D model of the facility with relevant information linked to each component.
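To make the positioning step more concrete, here is a deliberately simplified sketch that links a photo to the closest component in a model. It assumes the photo’s location has already been estimated in the model’s coordinate system, which in practice is the hard part and is not shown. The component tags, coordinates, and the `nearest_component` helper are hypothetical, and this nearest-neighbour lookup is a stand-in for illustration, not a description of the platform’s spatial computing framework.

```python
import math

# Hypothetical component positions in the model's coordinate system (metres).
components = {
    "P-201": (0.0, 0.0, 1.0),
    "V-101": (12.5, 3.0, 7.2),
    "E-305": (20.0, 8.0, 2.5),
}


def nearest_component(point, positions, max_distance=5.0):
    """Return the tag of the closest component, or None if none is within max_distance."""
    tag, pos = min(positions.items(), key=lambda item: math.dist(point, item[1]))
    return tag if math.dist(point, pos) <= max_distance else None


photo_location = (12.0, 3.5, 7.0)  # assumed to be already estimated for the image
print(nearest_component(photo_location, components))
```

The `max_distance` cutoff is there so that a photo taken far from any known component is left unlinked rather than attached to the wrong one.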

Handling this amount of data at scale requires significant processing capacity. High-resolution imagery, combined with continuous data contextualization, generates volumes that cannot be managed through manual workflows. High-performance computing enables this data to be processed, aligned, and updated continuously, keeping the representation usable as conditions evolve.

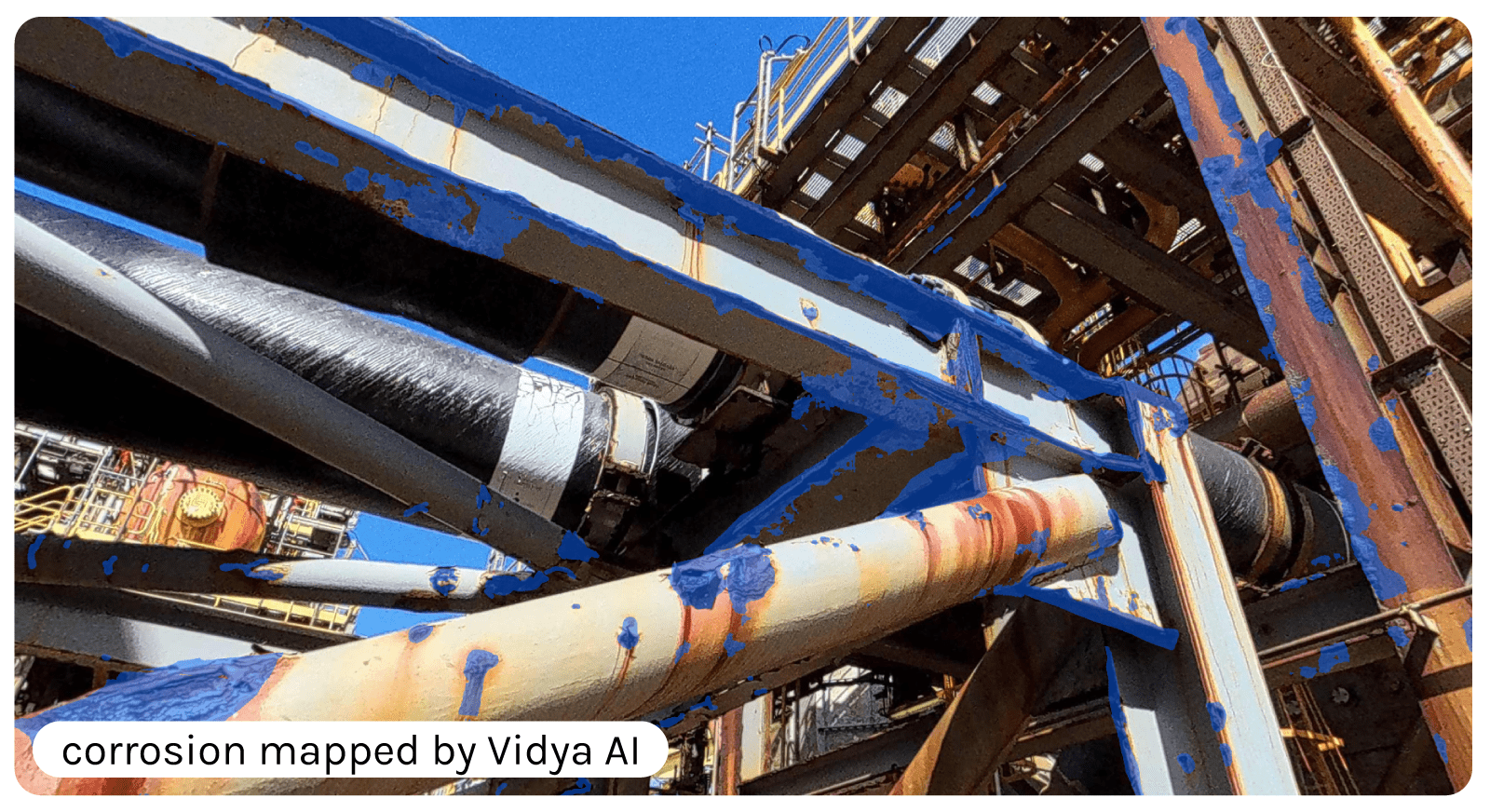

Once this spatial foundation is established, AI can operate with context. Visual models analyze the imagery directly within its location, identifying patterns such as corrosion, coating degradation, or structural deformation.
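One way to picture how located findings could feed a heatmap is to aggregate them per component. The sketch below is an assumption-laden example: the detection tuples, severity scores, and bucket thresholds are invented for illustration and do not come from Vidya’s models or product.

```python
from collections import defaultdict

# Hypothetical detections: (component tag, defect type, severity between 0 and 1).
detections = [
    ("V-101", "corrosion", 0.35),
    ("V-101", "coating degradation", 0.80),
    ("P-201", "corrosion", 0.10),
]


def severity_by_component(items):
    """Keep the worst severity seen for each component."""
    worst = defaultdict(float)
    for tag, _defect, severity in items:
        worst[tag] = max(worst[tag], severity)
    return dict(worst)


def heat_level(severity):
    """Map a severity score to a coarse heatmap bucket (thresholds are illustrative)."""
    if severity >= 0.7:
        return "high"
    if severity >= 0.3:
        return "medium"
    return "low"


for tag, severity in severity_by_component(detections).items():
    print(tag, heat_level(severity))
```

Taking the worst severity per component is a conservative choice; an average or a count of findings would be equally plausible depending on how the heatmap is meant to be read.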

By creating a living “As-Built” environment, the system ensures that engineers are no longer working with outdated paper drawings or missing field data, but with a realistic digital representation of the asset’s current state.

The Road Ahead

We are moving out of the AI investment supercycle, a period where capital and hype moved significantly faster than actual productivity gains. As the market enters a phase of disciplined rebalancing, the focus is shifting toward value-driven deployments that can survive a strict budget review.

This market change is likely to trigger a “software extinction event” for lightweight AI tools that are not able to demonstrate organizational value. In this new landscape, the advantage belongs to integrated suites and vertical specialists who don’t just provide insights but take ownership of an entire workflow from start to finish.

By focusing on deep automation and resilient, self-sustaining business models, these systemic platforms provide the industrial-grade trust and repeatability that the next phase of the market demands.

Ultimately, the difference between a company that just uses AI and a truly AI-powered operation comes down to the foundation. While standalone agents are useful for small, isolated tasks, they cannot act as the organizational nervous system needed to manage high-stakes industrial assets.

By building on a foundation that connects data and workflows in context, companies can move past the hype. It is time to stop adding more bots to the pile and start building a deeper, systemic foundation that turns AI from an experimental cost into a structural asset.